In 2026, companies are aiming for agentic infrastructure optimisation. This means instead of a human monitoring an agent 24/7 to make sure it doesn’t slip up, the goal is for autonomous agents to actually be trusted to reliably manage, scale and optimise IT infrastructure on their own.

Harness engineering has emerged as prompt-based agents alone are no longer enough to satisfy organisations’ ambitions. Traditional automation, which follows fixed scripts, performs well at best-case scenarios, but crumbles when confronted with unexpected errors. Forward-looking IT departments are entering a new wave of self-managing environments that proactively adapt.

But agentic infrastructure optimisation is a high stakes area. Models can go astray and hallucinate. Like a horse, it needs to be strapped in with a harness to keep it under control – that’s where harness engineering gets its name.

To achieve this, enterprises are choosing harness engineering solutions. FLock.io – a leading privacy-preserving AI solutions provider – has switched from simple prompt engineering and basic agents to harness engineering. This highly technical blog, aimed at engineers, tells the story of FLock.io’s journey to that decision.

[ 👋 Hi there! If you’re here to find out more about FLock.io, follow us on X, read our docs, sign up to AI Arena on train.flock.io and email us at hello@flock.io. We just launched FOMO, completing our full-cycle decentralised AI platform.]

Harness engineering explained

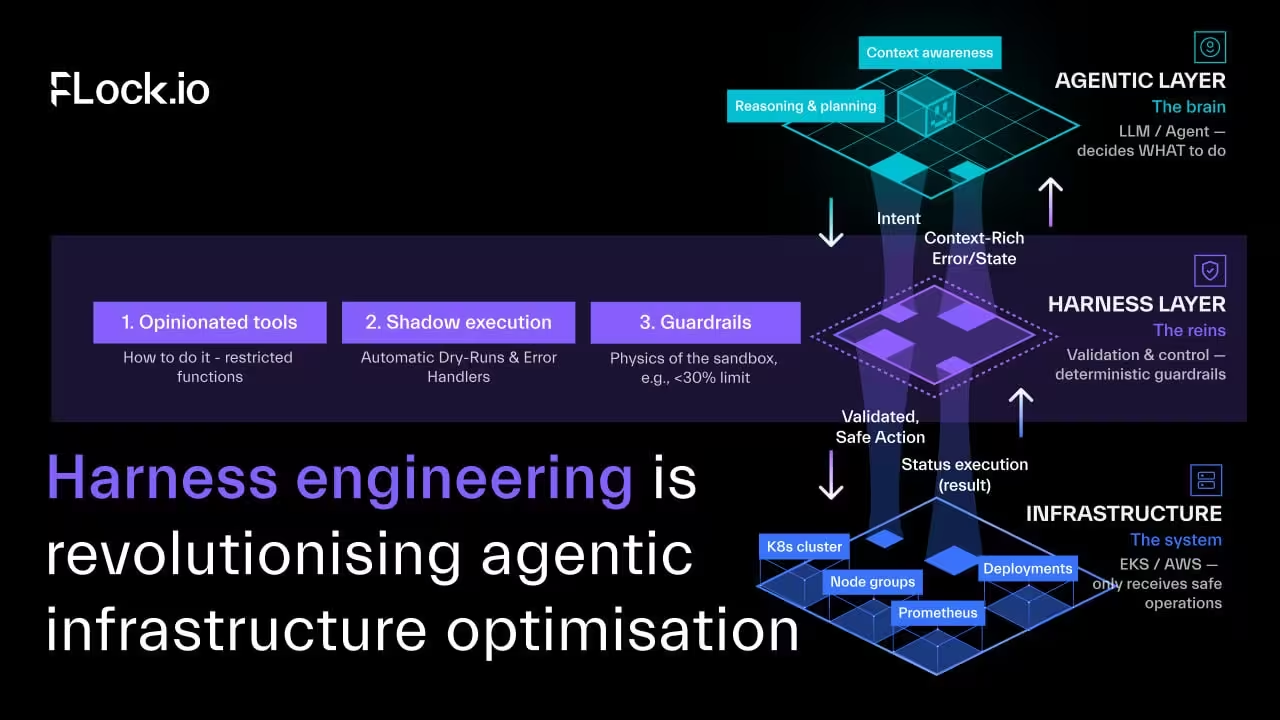

The name of harness engineering comes from horseriding – a horse is powerful, but without reins it might run off in all sorts of directions. Likewise, an engineer can wrap a harness around a model to keep it in check, namely the infrastructure you build around the model (systems, guardrails, testing, tools, constraints and feedback loops). The goal is to ensure AI agents deliver reliable, production-grade performance.

It contrasts with prompt-based agents, which might work 95% of the time but fail to adapt when a critical API call fails.

With harness engineering, the model isn’t acting directly on infrastructure, but operating through a validation and control layer.

Giving AI agents autonomy comes with risks

The dream for infrastructure optimisation is an autonomous Site Reliability Engineer (SRE) that monitors Kubernetes (e.g., EKS) clusters, digests metrics (e.g., Prometheus), analyses communications (e.g., Slack), and dynamically optimises resources for cost savings.

However, the risk is severe: an LLM error, such as a hallucinated YAML or aggressive node termination, can cause catastrophic downtime for our backend. To address this, we will detail harness engineering’s application for safe EKS cluster optimisation.

FLock.io came across 3 critical failures

In our early iterations, FLock.io built agents using standard frameworks (like early LangGraph or AutoGen setups). We gave the agent a persona (“You are an expert AWS SRE”), strict instructions (“Do not scale down core services”), and direct access to underlying APIs like kubectl apply and AWS CLI.

We quickly ran into three critical failure modes:

- The syntax death spiral:

The agent would occasionally generate a malformed YAML file. kubectl would throw a massive stack trace, confusing the agent, which would then enter a retry loop until it exhausted its token limit and crashed.

- Unbounded blast radius:

Despite prompt constraints, the agent might decide that a service with 5% CPU utilisation should immediately have its nodes slashed by 80%, completely ignoring Graceful Shutdowns or Pod Disruption Budgets (PDBs).

- Fragile constraints:

Prompt-based guardrails are fundamentally weak. You are relying on the LLM’s probabilistic reasoning to consistently remember and adhere to safety rules during complex, multi-step operations.

The core issue was that the AI was operating without a safety net. We were relying on the LLM to never trip, instead of building a world where tripping isn’t fatal.

Harness engineering redefines the agent environment

Harness engineering shifts the focus from optimising the model’s prompts to architecting a highly opinionated, defensive scaffolding around the model. In an infrastructure context, we are building a neuro-symbolic system: the LLM handles the “fuzzy” logic (interpreting metrics, reading context, planning), while deterministic code handles the physics and boundaries of the environment.

Here is how we completely overhauled our EKS cost-optimisation agent using this approach:

1. Opinionated tools vs. open APIs

Instead of giving the agent raw kubectl access, the harness exposes strictly typed, domain-specific actions.

For instance, we may use a tool called propose_resource_adjustment(deployment, new_cpu, new_mem). Under the hood, this tool contains deterministic if/else logic written by human SREs:

- if new_mem < 256Mi: reject

- if resource_reduction > 30%: trigger_circuit_breaker

The paradigm shift: These if/else rules are no longer trying to make the decision (which leads to rule explosion in traditional automation). Instead, they act as the unbreakable laws of physics in the agent's sandbox. The agent plans the route; the harness ensures it doesn't drive off a cliff.

2. Shadow Execution and Dry-Runs

In our harness, an agent's decision is never applied directly to production.

When the agent proposes an optimisation, the harness intercepts it and runs a “kubectl apply --dry-run=server” against the cluster to validate syntax and admission controllers.

The harness packages this deterministic data into a structured report and feeds it back to the agent: “Your proposed change passed syntax checks. It will save $450/month but will cause 4 pod restarts. Confirm execution?”

3. The error feedback loop

When an agent inevitably makes a mistake – such as trying to provision a “t3.large” instance in an AWS availability zone that is currently out of capacity – the harness does not crash.

Instead, the harness catches the native AWS exception, translates it into standard, context-rich natural language, and returns it to the agent as a tool response: “Action failed: InsufficientInstanceCapacity in us-east-1a. Please select an alternative instance type.” This transforms fatal errors into learning opportunities, allowing the agent to dynamically replan and self-correct without human intervention.

The key difference between harness engineering and rules-based systems

The distinction between harness engineering and older rule-based systems became clear during our work on agentic infrastructure optimisation. Unlike older systems, which rely on a potentially unmanageable “rule explosion” (e.g., “if date is not PRIME_DAY AND cpu_usage_is_low AND is_not_critial_service…”), optimisation strategy is no longer determined by exhaustively defined rules.

In harness engineering, the “if/else” logic within our defined tools and sandbox merely represents “physical rules”. We are not dictating the simulation's outcome (like how a falling object simulates); instead, we impose constraints to prevent the system from violating fundamental laws, such as Newton’s universal law of gravitation.

FLock.io now prioritises the strength of the harness

By adopting harness engineering, FLock.io stopped treating AI agents as human replacements that need to be trained to perfection. Instead, we treat them as powerful reasoning engines operating within a highly controlled, fault-tolerant operating system.

In production infrastructure, reliability should come from the strength of the system and its guardrails, not just from how smart the model is. It must depend on the strength of your “harness”.

More about FLock.io

FLock.io is an AI research and infrastructure company pioneering enterprise-grade federated learning and distributed AI solutions. Its decentralised federated learning architecture and production-ready platforms (AI Arena, FL Alliance, and FLock API Platform) enable organisations to train and deploy their own custom AI models on local hardware while maintaining full data privacy, model ownership, and regulatory alignment by design.

[ 👋 Hi there! If you’re here to find out more about FLock.io, follow us on X, read our docs, sign up to AI Arena on train.flock.io and email us at hello@flock.io. We just launched FOMO, completing our full-cycle decentralised AI platform.]